NodeTool Studio — desktop

Mac, Windows, Linux. Local inference via Ollama, MLX, and GGUF. Works offline. Prompts and outputs stay on disk. BYOK for cloud providers when you want them.

Download Studio →

The open creative AI workspace

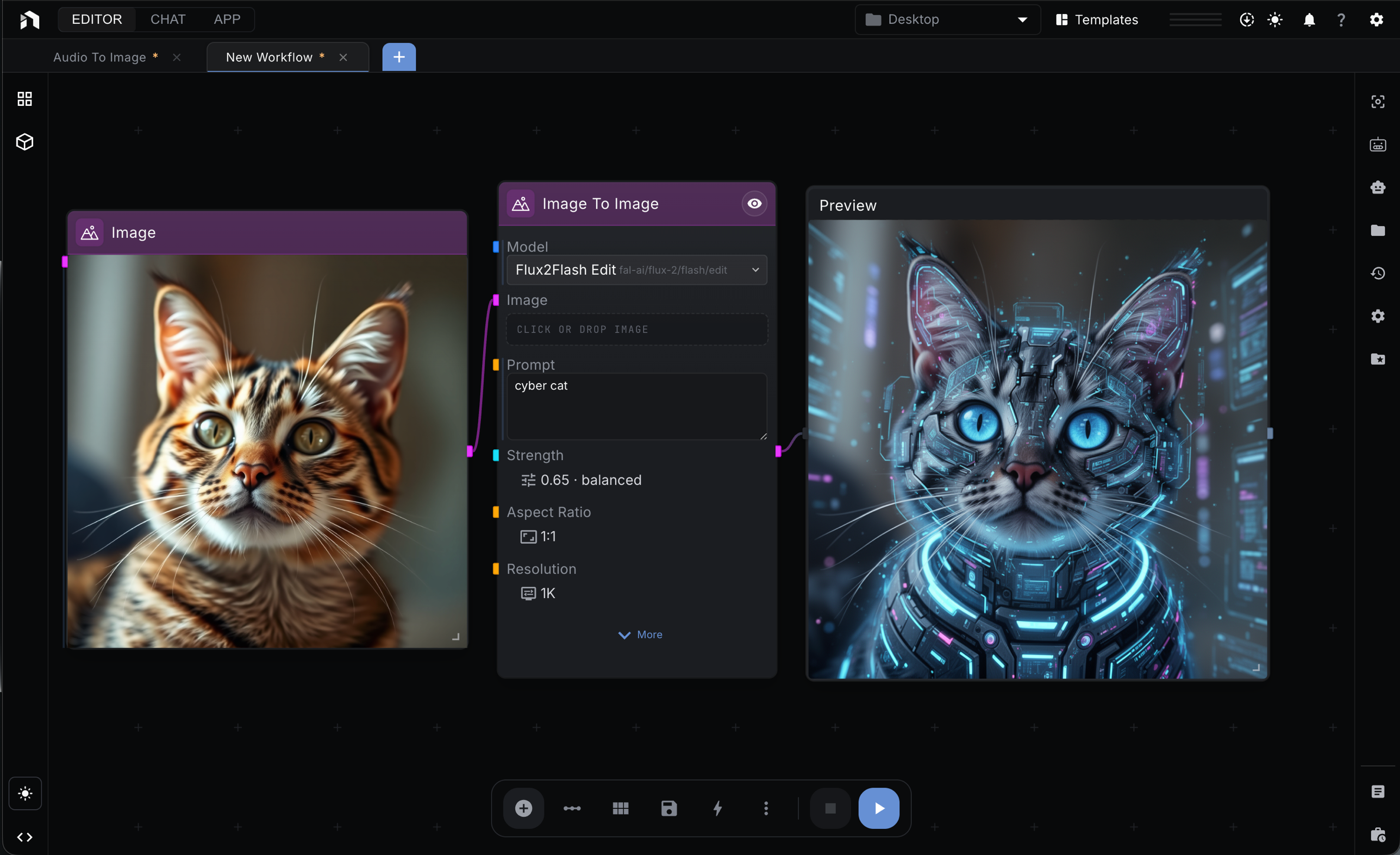

NodeTool replaces the chatbox with a node canvas where image, video, audio, and LLM models run side by side. Bring your keys, or run everything locally. Open source, AGPL-3.0.

Same code, same workflows. Both AGPL-3.0.

Hosted, no install. Same canvas, same nodes. BYOK for every cloud provider: OpenAI, Anthropic, Gemini, Replicate, FAL, ElevenLabs, HuggingFace. No local models.

Open Cloud →

No credit markup. Cloud hosts the same code in this repo. Self-host the Docker images any time. You pay providers directly.

Flux, Qwen, Wan, Seedance, Sora, Veo, Kling on one canvas.

Movie Posters →

Prompt to storyboard to narration to animation to score.

Story to Video →

Music, sound design, narration. ElevenLabs, MusicGen, Whisper in the graph.

Image to Audio Story →

Multi-step agents that plan, call tools, and drive pipelines.

Realtime Agent →

More patterns (pipelines, data, RAG, email) in the Cookbook.